From its humble beginnings with just a handful of staff housed in modest surroundings to becoming one of the leading academic supercomputing centers in the world, the Texas Advanced Computing Center (TACC) has not stopped evolving since opening its doors in June 2001.

That year, TACC’s first supercomputer boasted the capability to do 50 gigaflops, or 50 billion calculations per second (the average home computer today can do around 60 gigaflops). In 2021, the center’s most powerful supercomputer, Frontera, was 800,000 times more powerful.

High-performance computing (HPC) had in fact already been at UT long before TACC was established. Its precursor, the Center for High Performance Computing, dates back to the 1960s. But things have dramatically improved since then, as J. Tinsley Oden — one of UT’s most decorated scientists, engineers and mathematicians — explains. Oden is also widely considered to be the father of computational science and engineering.

“I came to the university for the first time in 1973 as a visitor,” he says. “The computing was still very primitive, even by the standards of the time. People were using desk calculators, which shocked me.” Oden was central to nurturing an HPC-friendly environment in Texas. Meanwhile, supercomputing was beginning to grow exponentially nationwide.

What is supercomputing?

The term “supercomputing” refers to the processing of massively complex or data-laden problems using the compute resources of multiple computer systems working in parallel (i.e., a supercomputer). Supercomputing also denotes a system working at the maximum potential performance of any computer. Its power can be applied to weather forecasting, energy, the life sciences and manufacturing.

Although UT is a top-tier university, enthusiasm for supercomputing there ebbed and flowed over the years. As a result, the HPC headquarters shifted locations across campus (including a brief stint when operations were conducted out of a stairwell in the UT Tower). “It’s remarkable how far things have come since then,” Oden says.

Calls from HPC advocates — Oden and many others — had grown so loud during the 1980s and 1990s that university leaders couldn’t ignore them any longer.

“When I became president of UT in 1998, there was already a lot of discussion around the need to prioritize advanced computing — or ‘big iron’ as we called it back then — at the university,” says former UT President Larry Faulkner.

In 1999, Faulkner named Juan M. Sanchez as vice president for research; that appointment proved central to the TACC story. Instrumental in a variety of university initiatives, Sanchez echoed the calls from HPC advocates to build a home for advanced computing at UT.

In 2001, a dedicated facility was established at UT’s J.J. Pickle Research Campus in North Austin with a small staff led by Jay Boisseau, who influenced the future direction of TACC and the wider HPC community. TACC grew rapidly in part due to Boisseau’s acceptance of hand-me-down hardware, an aggressive pursuit of external funding, and success forging strong collaborations with technology partners, including notable hometown success story Dell Technologies. The center also enjoyed access to a rich pipeline of scientific and engineering expertise at UT.

One entity in particular became a key partner: the Institute for Computational Engineering and Sciences (ICES, established in 2003). Since renamed the Oden Institute for Computational Engineering and Sciences in recognition of its founder, J. Tinsley Oden, the institute quickly came to be regarded as one of the leading computational science and engineering (CSE) institutes in the world.

Thanks to the unwavering support of Texan educational philanthropists Peter and Edith O’Donnell, Oden was able to recruit the most talented computational scientists in the field and build a team that could not only expand the mathematical agility of CSE as a discipline but also grow the number of potential real-world applications.

“ICES was such a successful enterprise, it produced great global credibility for Texas as a new center for HPC,” Faulkner says.

Computational science and advanced computing tend to move forward symbiotically, which is why Oden Institute faculty have been instrumental in planning TACC’s largest supercomputers, providing insights into the types of computing environment that researchers require to deliver impactful research outcomes.

“Computational science and engineering today is foundational to scientific and technological progress — and HPC is in turn a critical enabler for modern CSE,” says Omar Ghattas, who holds the John A. and Katherine G. Jackson Chair in Computational Geosciences with additional appointments in the Jackson School of Geosciences and the Department of Mechanical Engineering.

Ghattas has served as co-principal investigator on the Ranger and Frontera supercomputers and is a member of the team planning the next-generation system at TACC.

“Every field of science and engineering, and increasingly medicine and the social sciences, relies on advanced computing for modeling, simulation, prediction, inference, design and control,” he says. “The partnership between the Oden Institute and TACC has made it possible to anticipate future directions in CSE. Having that head start has allowed us to deploy systems and services that empower researchers to define that future.”

No(de) time for complacency

Over its first 20 years, TACC deployed several new supercomputers, each larger than the last, and gave each a moniker appropriate to the confident assertion that everything is bigger and better in Texas: Lonestar, Ranger, Stampede, Frontera.

While every system was and still is treated like a cherished member of TACC’s family, current Executive Director Dan Stanzione doesn’t flinch when asked to pick a favorite child. “We really got on the map with Ranger,” says Stanzione, who was a co-principal investigator on the Ranger project.

The successful acquisition of the Ranger supercomputer in 2007, the first “path to petascale” system deployed in the U.S., catapulted TACC to a national level of supercomputing stardom. At this point, Stanzione was the director of high performance computing at Arizona State University but was playing an increasingly important role in TACC operations.

Ranger was slated to be the largest open science system in the world at that time, and TACC was still a relatively small center compared with other institutions bidding for the same $59 million National Science Foundation (NSF) grant. Plenty of skeptics doubted TACC’s chances of winning the award.

However, Stanzione, who officially joined TACC as deputy director soon after the successful Ranger bid, and Boisseau were a formidable pair. “TACC has a reputation for punching above its weight,” Oden says. “Jay and Dan just knew how to write a proposal that you simply could not deny.”

Stanzione continued the winning approach when he took over as executive director in 2014. In addition, he has held the title of associate vice president for research at UT since 2018. “Juan Sanchez also made the decision to appoint Stanzione as Jay Boisseau’s successor,” Faulkner says. “And while the success of TACC has, of course, come from those actually working in the field, Sanchez deserves great credit for establishing an environment where that success could thrive.”

In particular, the center stands out for the entrepreneurial culture it has cultivated over the years. Stanzione describes the approach as being akin to a startup. “We were hungry to win the Ranger contract and, against the odds, it paid off.”

The formula for success

TACC has kept its edge for 20 years by prioritizing the needs of researchers and maintaining strong partnerships.

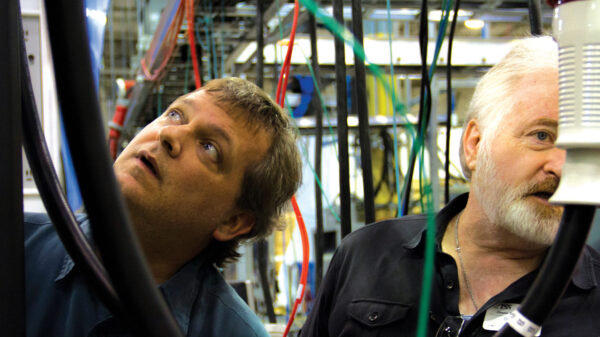

As important as TACC systems are as a resource for the academic community, it’s the people of TACC who make the facility so special. “They’re not just leaders in designing and operating frontier systems; they also support numerous users across campus, teach HPC courses and collaborate on multiple research projects,” Ghattas says.

The center’s mission and purpose have always focused on the researcher and on enabling discovery in open science. A list of advances would include forecasting storm surge during hurricanes, confirming the discovery of gravitational waves and identifying one of the most promising new materials for superconductivity.

But in recent decades, the nature of computing and computational science has changed. Whereas researchers have historically used the command line to access supercomputers, today a majority of scholars use supercomputers remotely through web portals and gateways, uploading data and running analyses through an interface that would be familiar to any Amazon or Google customer. TACC’s portal team — nonexistent at launch — is now the largest group at the center, encompassing more than 30 experts and leading a nationwide institute to develop best practices and train new developers for the field.

Likewise, life scientists were a very small part of TACC’s user base in 2001. Today, they are among the largest and most advanced users of supercomputers, leveraging TACC systems to model the billion-atom coronavirus or run complex cancer data analyses.

The physical growth of data in parallel with rapid advances in data science, machine learning and artificial intelligence marked another major shift for the center, requiring new types of hardware, software and expertise that TACC integrated into its portfolio.

“The Wrangler system, which operated from 2014 to 2020, was the most powerful data analysis system for open science in the U.S.,” Stanzione says. “Two current systems, Maverick and Longhorn, were custom-built to handle machine and deep learning problems and are leading to discoveries in areas from astrophysics to drug discovery.”

With almost 200 staff members, TACC’s mission has grown well beyond servicing and maintaining the needs of the big iron. It also includes large, active groups in scientific visualization, code development, data management and collections, and computer science education and outreach.

The next decades

In 2019, TACC was successful in its bid to build and operate Frontera, a $120 million NSF-funded project that created not just the fastest supercomputer at any university worldwide, but one of the most powerful systems on the planet. (It was No. 13 on the November 2021 TOP500 list.)

The NSF grant further stipulated that TACC would develop a plan for a leadership-class computing facility (LCCF), which would operate for at least a decade and deploy a system 10 times as powerful as Frontera.

TACC now has an opportunity not just to build a bigger machine but to define how computational science and engineering progress in the coming decade. “We’ll help lead the HPC community, particularly in computational science and machine learning, which will both play greater roles than ever before,” Stanzione says.

“The LCCF will be the open science community’s premier resource for catalyzing a new generation of research that addresses societal grand challenges of the next decade,” Ghattas says.

The design, implementation and management of the next system, as well as a new facility, mean that TACC could be about to experience its most transformative period to date. And that change presents one final question: If TACC has made it this far since 2001, what might we be celebrating at its 40th anniversary?

“I don’t know what TACC will look like two years from now,” Stanzione says. “But in another 20 years, I hope it looks entirely different. Then at least I’ll know the center’s legacy has persisted.”

This article was originally published in Texascale, the magazine of the Texas Advanced Computing Center.